tf.keras.utils.image_dataset_from_directory

There is a mode for `image_dataset_from_directory` that you can turn on or off with the `labels` parameter. Download and extract a zip file containing the images, then create a `tf.data.Dataset` for training and validation using the … Currently, I'm using `tf.keras.utils.image_dataset_from_directory` to load about 280,000+ images with a batch size of 128, and for the model, I am using a batch size … This tutorial shows how to load and preprocess an image dataset in three ways: … Input pipeline for images using Keras and TensorFlow. If you want to include the resizing logic in … `import tensorflow_datasets as tfds` followed by `ds, meta = tfds.load('citrus_leaves', with_info=True, split='train', shuffle_files=True)`; running this code the first time will … `val_data = tf.keras.preprocessing.image_dataset_from_directory('etlcdb/etl9g_img/', image_size=(128, 127), validation_split=0.3, subset=…` You previously resized images using the `image_size` argument of `tf.keras.utils.image_dataset_from_directory`.
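A minimal sketch of a training/validation split with `tf.keras.utils.image_dataset_from_directory`, assuming TensorFlow 2.9 or later. Because no real dataset accompanies this page, the hypothetical helper `make_toy_image_dir` writes random PNGs into one sub-directory per class; the class names, image counts, and seed value are illustrative assumptions. Passing the same `seed` and `validation_split` to both calls is what keeps the `training` and `validation` subsets from overlapping (recent TensorFlow versions reject a shuffled split without a seed), and `image_size` resizes every image as it is loaded.

```python
import os
import tempfile

import tensorflow as tf


def make_toy_image_dir(root, classes=("cats", "dogs"), per_class=10, size=(64, 48)):
    """Hypothetical helper: write random RGB PNGs into root/<class_name>/."""
    for name in classes:
        class_dir = os.path.join(root, name)
        os.makedirs(class_dir, exist_ok=True)
        for i in range(per_class):
            pixels = tf.random.uniform((*size, 3), maxval=256, dtype=tf.int32)
            png = tf.io.encode_png(tf.cast(pixels, tf.uint8))
            tf.io.write_file(os.path.join(class_dir, f"{i}.png"), png)
    return root


data_dir = make_toy_image_dir(tempfile.mkdtemp())

split_args = dict(
    labels="inferred",      # class labels come from the sub-directory names
    validation_split=0.3,   # hold out 30% of the files for validation
    seed=123,               # identical seed in both calls -> disjoint subsets
    image_size=(128, 128),  # every image is resized to (height, width) on load
    batch_size=4,
)
train_ds = tf.keras.utils.image_dataset_from_directory(
    data_dir, subset="training", **split_args)
val_ds = tf.keras.utils.image_dataset_from_directory(
    data_dir, subset="validation", **split_args)

print(train_ds.class_names)  # ['cats', 'dogs'] (sorted directory names)
```

With 20 files and `validation_split=0.3`, 6 files go to the validation subset and the remaining 14 to training.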

The code for all the experiments can be … `labels` is either `"inferred"` (labels are generated from the directory structure) or … The `tf.keras.applications.imagenet_utils` module provides utilities for ImageNet data preprocessing and prediction decoding. Given the way that `validation_split` and `subset` interact with `image_dataset_from_directory()`, is the first version of my code resulting in data … `tf.keras.backend.image_data_format()` returns the default image data format convention.
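`preprocess_input` in `tf.keras.applications.imagenet_utils` supports several `mode` values. A small check, assuming NumPy and TensorFlow are installed: `"tf"` mode scales pixels to the range [-1, 1], while the default `"caffe"` mode flips RGB to BGR and subtracts the per-channel ImageNet means without scaling. The random batch is made up for illustration, and `data_format` is passed explicitly so the result does not depend on the global image data format setting. Because the function may modify NumPy float inputs in place, each call receives a copy.

```python
import numpy as np
import tensorflow as tf

preprocess_input = tf.keras.applications.imagenet_utils.preprocess_input

# A made-up batch of two 224x224 RGB images with pixel values in [0, 255].
rng = np.random.default_rng(0)
batch = rng.integers(0, 256, size=(2, 224, 224, 3)).astype("float32")

# "tf" mode: x / 127.5 - 1, i.e. values in [-1, 1].
tf_style = preprocess_input(batch.copy(), data_format="channels_last", mode="tf")

# "caffe" mode: RGB -> BGR, then subtract the ImageNet channel means (BGR order).
caffe_style = preprocess_input(batch.copy(), data_format="channels_last", mode="caffe")
```

Prediction decoding (`decode_predictions`) downloads a class-index file on first use, so it is left out of this offline sketch.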

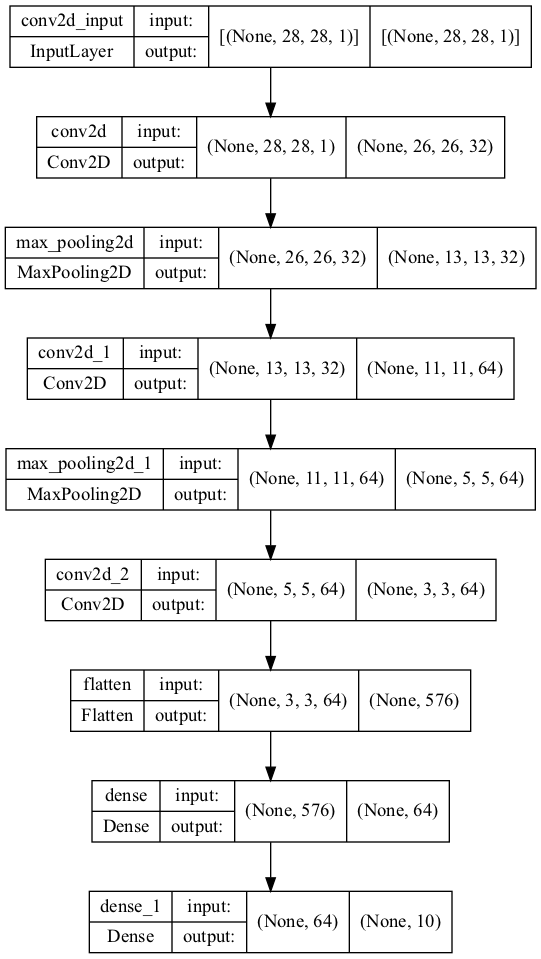

Keras (Part 1): The Sequential Model in Practice, Asp1rant, 博客园 (Cnblogs)

`tf.keras.backend.set_image_data_format()` sets the value of the image data format convention.
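A short sketch of reading and temporarily changing the global image data format convention with `tf.keras.backend.image_data_format()` and `tf.keras.backend.set_image_data_format()`. The usual default is `"channels_last"` (batch, height, width, channels); the `try`/`finally` restore and the use of `tf.transpose` to convert a batch between layouts are illustrative choices, not requirements.

```python
import tensorflow as tf

original = tf.keras.backend.image_data_format()  # usually "channels_last"

try:
    # Switch the global convention, e.g. for layers that expect NCHW inputs.
    tf.keras.backend.set_image_data_format("channels_first")
    switched = tf.keras.backend.image_data_format()
finally:
    # Restore the previous value so later code is unaffected.
    tf.keras.backend.set_image_data_format(original)

# Converting an existing batch between the two layouts.
nhwc = tf.zeros((8, 128, 128, 3))             # channels_last
nchw = tf.transpose(nhwc, perm=[0, 3, 1, 2])  # channels_first
print(switched, nchw.shape)
```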

Example call, after `from tensorflow import keras`: `train_ds = keras.…image_dataset_from_directory(directory='training_data/', labels='inferred', label_mode='categorical', batch_size=…`
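The `label_mode` and `labels` arguments control what each batch contains. A minimal sketch, assuming a three-class toy directory written by the hypothetical helper `make_toy_image_dir` (class names, counts, and image sizes are made up): with `label_mode="categorical"` the labels are one-hot vectors, and with `labels=None` the dataset yields image batches only, which suits prediction on unlabeled folders.

```python
import os
import tempfile

import tensorflow as tf


def make_toy_image_dir(root, classes=("bird", "cat", "dog"), per_class=8, size=(32, 32)):
    """Hypothetical helper: write random RGB PNGs into root/<class_name>/."""
    for name in classes:
        class_dir = os.path.join(root, name)
        os.makedirs(class_dir, exist_ok=True)
        for i in range(per_class):
            pixels = tf.random.uniform((*size, 3), maxval=256, dtype=tf.int32)
            png = tf.io.encode_png(tf.cast(pixels, tf.uint8))
            tf.io.write_file(os.path.join(class_dir, f"{i}.png"), png)
    return root


data_dir = make_toy_image_dir(tempfile.mkdtemp())

# Labels inferred from the sub-directory names, returned as one-hot vectors.
labeled_ds = tf.keras.utils.image_dataset_from_directory(
    data_dir, labels="inferred", label_mode="categorical",
    batch_size=4, image_size=(32, 32))
images, one_hot = next(iter(labeled_ds))

# labels=None: the dataset yields image batches only, with no labels.
unlabeled_ds = tf.keras.utils.image_dataset_from_directory(
    data_dir, labels=None, batch_size=4, image_size=(32, 32), shuffle=False)
image_batch = next(iter(unlabeled_ds))
```

The default `label_mode="int"` would instead return integer class indices, which pair with a sparse categorical loss.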

Image Dataset from Directory with and without Label List in Keras

Guide to creating an input pipeline for a custom image dataset for deep learning models using Keras and …
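One common way to finish such a pipeline, sketched under assumptions: cache the loaded batches, shuffle, and prefetch with `tf.data.AUTOTUNE`, then keep the resizing and rescaling logic inside the model with `tf.keras.layers.Resizing` and `tf.keras.layers.Rescaling`, so the same preprocessing also runs at inference time. The toy data from the hypothetical `make_toy_image_dir` helper, the 96-pixel to 64-pixel resize, and the tiny architecture are illustrative choices, not a recommended configuration.

```python
import os
import tempfile

import tensorflow as tf


def make_toy_image_dir(root, classes=("a", "b"), per_class=6, size=(96, 96)):
    """Hypothetical helper: write random RGB PNGs into root/<class_name>/."""
    for name in classes:
        class_dir = os.path.join(root, name)
        os.makedirs(class_dir, exist_ok=True)
        for i in range(per_class):
            pixels = tf.random.uniform((*size, 3), maxval=256, dtype=tf.int32)
            png = tf.io.encode_png(tf.cast(pixels, tf.uint8))
            tf.io.write_file(os.path.join(class_dir, f"{i}.png"), png)
    return root


data_dir = make_toy_image_dir(tempfile.mkdtemp())
train_ds = tf.keras.utils.image_dataset_from_directory(
    data_dir, image_size=(96, 96), batch_size=4)  # integer labels by default

AUTOTUNE = tf.data.AUTOTUNE
# Cache batches during the first pass, reshuffle batches each epoch,
# and overlap data loading with training.
train_ds = train_ds.cache().shuffle(buffer_size=16).prefetch(buffer_size=AUTOTUNE)

model = tf.keras.Sequential([
    tf.keras.Input(shape=(96, 96, 3)),
    tf.keras.layers.Resizing(64, 64),      # resizing lives inside the model
    tf.keras.layers.Rescaling(1.0 / 255),  # map [0, 255] pixels to [0, 1]
    tf.keras.layers.Conv2D(8, 3, activation="relu"),
    tf.keras.layers.GlobalAveragePooling2D(),
    tf.keras.layers.Dense(2),              # one logit per class
])
model.compile(
    optimizer="adam",
    loss=tf.keras.losses.SparseCategoricalCrossentropy(from_logits=True),
    metrics=["accuracy"],
)
history = model.fit(train_ds, epochs=1, verbose=0)
```

Putting `Resizing` in the model means images of the loaded size can be fed directly at inference, at the cost of doing the resize on every forward pass rather than once during loading.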

tf.keras.preprocessing.image_dataset_from_directory doesn't work on TPU

Learning TensorFlow: tf.keras (Part 1), tf.keras.preprocessing (unfinished)

Training is slow when using tf.keras.utils.Sequence with large Numpy

How to use a tf.data.Dataset generator to feed images into a Keras model with multiple inputs? python黑洞网

tf.keras.preprocessing.image.ImageDataGenerator, 灰信网 (software development blog aggregator)